5 Things I Learned Building Qdrant + RAG That Aren't in the Documentation

- Türker Şentürk

- AI

- 20 Nov, 2025

- 3 min read

5 Things I Learned Building Qdrant + RAG That Aren’t in the Documentation

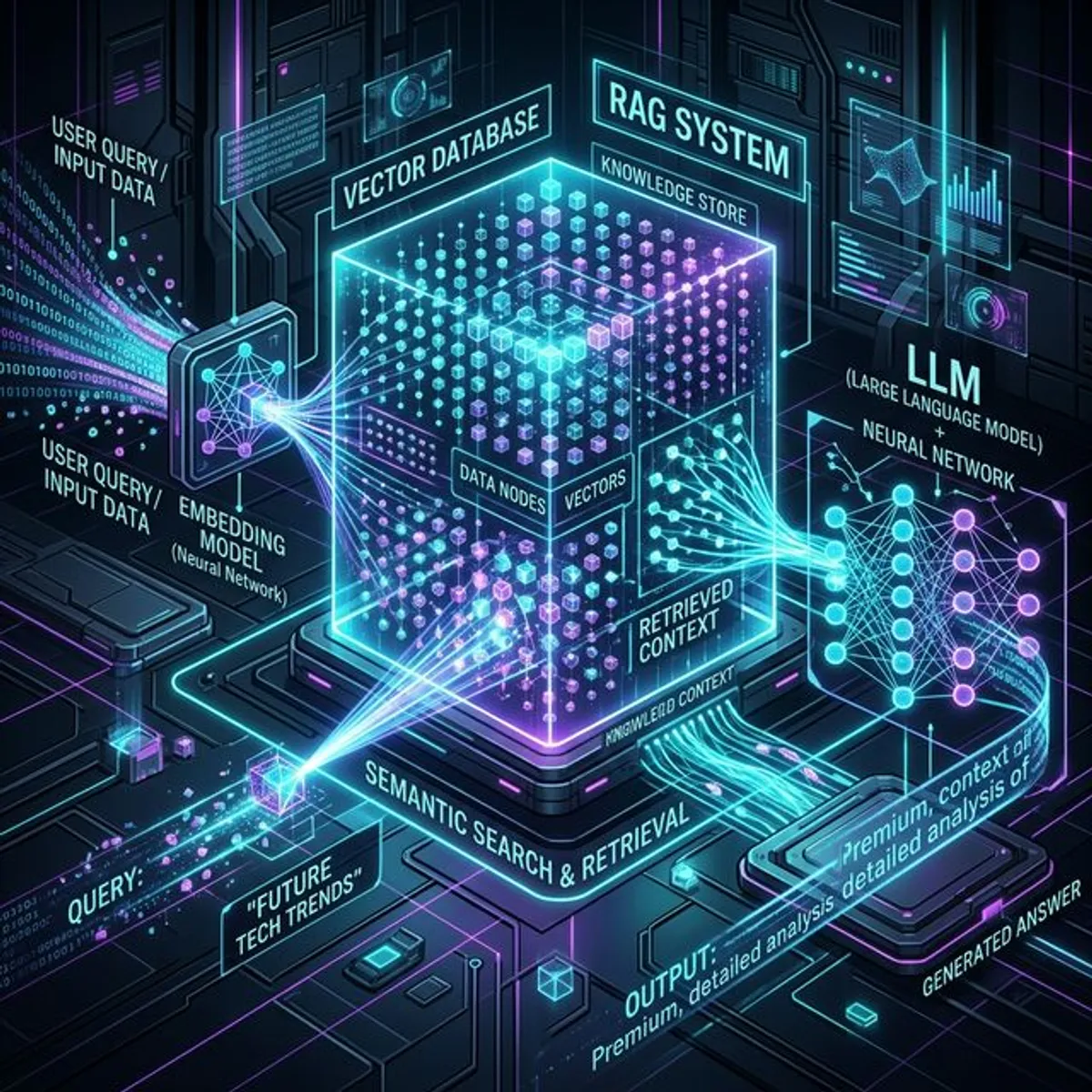

You want to design a Qdrant + RAG system. This means taking your documents, breaking them into pieces, converting them to vectors, storing them in a vector database, and pulling them out when needed using “cosine similarity.” So you’re not teaching the system anything - you’re just building your own smarter DB system.

Wait a minute, wouldn’t it be pretty much the same if you just trained an LLM? Yes, exactly… Our learning mechanism isn’t that different either. How many of us question what we’ve learned and go after something better? Or how many of us reject information we’ve already learned? Answer: none of us.

So we need to turn our data into chunks. Why? Why can’t it be in one piece? Are we afraid of increasing vector dimensions? No. By breaking documents into the smallest pieces possible, we’re actually trying to create a data map. The smaller they are, the more points and the more potential for extracting context… Okay then, why don’t we break it down word by word? No, that’s a terrible idea. Because we want what we’re breaking down to be the “SMALLEST MEANINGFUL PIECE.” Not a small meaningless piece.

Okay, now we have a knowledge cloud made from our own data. But what we’re looking for still isn’t in the knowledge cloud. What we find is still just the closest thing to what’s in this cloud in our system. Oops, there’s a problem. The closest thing it finds might have nothing to do with what I’m looking for. Of course. Remember how sometimes LLMs give you answers that have nothing to do with you? Or how a Generative AI generates an image that has nothing to do with what you wanted? It does. All of these are errors resulting from working with these distances and probabilities.

If you integrate a RAG system alongside your LLM system, you gradually design a system that contains the same information and doesn’t have to reach the LLM. Only when it gets an answer below a certain threshold value should it go to the LLM, and it should write the answer result to the vector db - this will both reduce LLM costs and allow you to build your own specialized system without depending on any high-cost fine-tuning process.

The System’s Soft Spot…

Now everything sounds incredibly good and flawless, right? It shouldn’t. Because after a while, this system can start spinning around itself like a dog trying to catch its tail and get stuck on the same things. Classic overfitting won’t let you off the hook here either. Because it always knows the same topic, it will start saying the same things like your boring relative who always talks about the same subject. The solution to this is to ensure it includes information on broader topics, also considering the sub-topics and related topics of its specialized subject.

So doing everything by the book doesn’t mean everything will be perfect :)

Tags :

Share :

Stay Ahead in Tech

Join thousands of developers and tech enthusiasts. Get our top stories delivered safely to your inbox every week.

No spam. Unsubscribe at any time.