NVIDIA Blackwell Ultra Lowers AI Agent Cost

- Türker Şentürk

- AI

- 16 Feb, 2026

- 10 min read

Blackwell Ultra: How NVIDIA’s New Chip Is Making Real‑Time AI Agents Cheaper (and Faster)

If you’ve ever tried to run a coding assistant that actually understands a whole codebase, you know the feeling: the UI freezes, the latency spikes, and you start wondering whether the model is just being lazy or your hardware is hitting a wall. The good news? NVIDIA just dropped a new generation of GPUs that promise to turn that frustration into a smooth, low‑cost conversation. Below is the low‑down on why Blackwell Ultra matters, who’s already using it, and what it could mean for the next wave of “agentic” AI.

Why “Agentic” AI Is Suddenly Everywhere

Look, we’ve all seen the hype around large language models that can write code, fix bugs, or even refactor an entire repository. But the real kicker is the scale of those requests. OpenRouter’s State of Inference report showed that programming-related queries jumped from 11% to roughly 50% of all inference traffic in just a year[^1]. That’s not a niche hobby; it’s a seismic shift in what developers expect from AI.

When you ask a coding assistant to “find all the places where this function is called across a 200‑kLOC repo,” you’re essentially asking the model to chew through hundreds of thousands of tokens in a single go. And you want the answer in less than a second, because every extra millisecond compounds across the many steps of a developer’s workflow.

Two things become crystal clear:

- Low latency is non‑negotiable. If the assistant stalls, the developer drops it.

- Token efficiency is the new cost metric. You’re not just paying for GPU hours; you’re paying for every token the model generates or consumes.

Enter the NVIDIA Blackwell platform, which has already been adopted by inference providers like Baseten, DeepInfra, Fireworks AI, and Together AI to slash cost per token by up to 10× compared with the previous generation[^2]. But the story doesn’t stop there. NVIDIA just announced Blackwell Ultra—the next step in a relentless march toward cheaper, faster, and more context‑rich AI.

From Blackwell to Blackwell Ultra: A Quick Hardware Primer

If you’ve been following the GPU wars, you know that “Hopper” was NVIDIA’s previous big leap. Blackwell, named after the mathematician and statistician David Blackwell, introduced a new architecture focused on tensor-core density and NVLink-based symmetric memory. The result? Better bandwidth for the massive matrix multiplications that LLMs love.

Now, Blackwell Ultra (the chip inside the GB300 NVL72 system) pushes those numbers even further:

| Feature | Blackwell (GB200) | Blackwell Ultra (GB300) |
|---|---|---|
| NVFP4 compute | Baseline | 1.5× higher |
| Attention processing speed | Baseline | 2× faster |
| Throughput per megawatt (low-latency) | ~10× vs. Hopper | Up to ~50× vs. Hopper |
| Cost per token (low-latency) | Up to 10× lower than Hopper | Up to 35× lower than Hopper |
| Cost per token, long-context (128k in / 8k out) | Baseline | 1.5× lower than GB200 |

Most of these figures come from NVIDIA’s own benchmarks, but they are more than marketing fluff: Signal65’s independent analysis confirmed that GB200 NVL72 already delivered >10× more tokens per watt than Hopper[^3]. When you stack the software upgrades on top of that, the gains multiply.

Software Is the Secret Sauce

Hardware can only take you so far. NVIDIA’s real edge lies in the codesign of the GPU and its software stack. A handful of projects have been quietly chipping away at latency bottlenecks, and the cumulative effect is staggering.

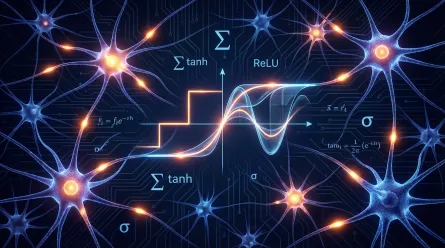

TensorRT‑LLM: The “Turbo” Mode for LLMs

Four months ago, the TensorRT‑LLM library could already accelerate inference by 2–3× on Blackwell. Today, the same library is delivering up to 5× better performance on GB200 for low‑latency workloads[^4]. The trick is a combination of:

- Kernel fusion – merging multiple GPU kernels into a single pass to reduce memory traffic.

- Dynamic scheduling – letting the GPU reorder work on the fly so that no compute unit sits idle.
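
To see why kernel fusion reduces memory traffic, here is a minimal back-of-the-envelope model in Python. It is only a sketch under stated assumptions: the function name, the 2-byte (FP16) element size, and the idea that each unfused elementwise kernel reads and writes the whole tensor once are illustrative choices, not TensorRT-LLM’s actual implementation.

```python
def elementwise_traffic_bytes(num_elements: int, num_ops: int,
                              bytes_per_element: int = 2) -> dict:
    """Estimate global-memory traffic for a chain of elementwise ops.

    Assumption (hypothetical model): every unfused kernel reads each
    element once and writes it once; a fused kernel keeps intermediates
    in registers and touches global memory only at the start and end.
    """
    one_pass = 2 * num_elements * bytes_per_element  # one read + one write
    unfused = num_ops * one_pass                     # one pass per kernel
    fused = one_pass                                 # a single pass overall
    return {"unfused": unfused, "fused": fused, "reduction": unfused / fused}


if __name__ == "__main__":
    # Example: bias-add, activation, and scaling over one million values.
    stats = elementwise_traffic_bytes(num_elements=1_000_000, num_ops=3)
    print(stats)
```

In this simplified model the saving grows linearly with the number of kernels merged, which is why fusing long chains of small operations pays off most on memory-bound workloads.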

Dynamo & Mooncake: Smarter Serving

NVIDIA Dynamo is an open-source inference-serving framework rather than a compiler. It works above the model, splitting the compute-heavy prefill phase and the latency-sensitive decode phase across different GPUs and routing each request to a worker that already holds the relevant KV cache. That matters most for large MoE (Mixture-of-Experts) models, where a single request is spread across many GPUs.

Mooncake, an open-source project that originated at Moonshot AI, is built around the KV cache, the stored attention state that grows with every token of context. By pooling that cache and moving it between nodes instead of recomputing it, it cuts the work that makes contexts beyond 8 k tokens painful. Combined with the 2× faster attention processing in Blackwell Ultra, the system can keep latency low even when the model is chewing through 128 k tokens of code.

SGLang & NVLink Symmetric Memory

SGLang is an open-source serving framework whose runtime reuses cached prompt prefixes across requests and schedules batches efficiently, cutting the per-token overhead. Meanwhile, NVLink Symmetric Memory lets GPUs read and write each other’s memory directly instead of copying data through host memory, shaving off microseconds that matter when you’re aiming for sub-100 ms response times.

All of these pieces come together in the GB300 NVL72 system. One example is programmatic dependent launch, a CUDA mechanism that starts the next kernel’s setup phase before the previous one finishes, keeping the pipeline humming.

Low‑Latency Wins: 50× Throughput per Megawatt

If you’re building an interactive coding assistant that needs to respond instantly, the low‑latency metric is your north star. NVIDIA’s latest benchmark suite shows that GB300 NVL72 can push up to 50× higher throughput per megawatt compared with Hopper for exactly those workloads[^5].

What does that look like in practice? Imagine a SaaS platform that serves 10 k concurrent developers, each sending an average of 200 tokens per request. On Hopper, you’d need a massive GPU farm and still be paying a premium per token. On Blackwell Ultra, you can achieve the same throughput with a fraction of the power draw, translating into up to 35× lower cost per million tokens for low-latency scenarios.
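
A rough way to turn “throughput per megawatt” into a power budget for this scenario is sketched below. Only the 10 k developers, 200 tokens per request, and the up-to-50× figure come from the article; the 10-second request interval and the Hopper baseline of one million tokens per second per megawatt are hypothetical placeholders, so treat the output as an illustration of the ratio rather than a real sizing.

```python
def demand_tokens_per_second(developers: int, tokens_per_request: int,
                             seconds_between_requests: float) -> float:
    """Aggregate token demand if every developer sends requests at a fixed rate."""
    return developers * tokens_per_request / seconds_between_requests


def required_megawatts(tokens_per_second: float,
                       tokens_per_second_per_mw: float) -> float:
    """Power needed to sustain a given demand at a given efficiency."""
    return tokens_per_second / tokens_per_second_per_mw


# From the article: 10 k developers, 200 tokens per request.
# Hypothetical: one request every 10 seconds per developer.
demand = demand_tokens_per_second(10_000, 200, seconds_between_requests=10.0)

# Hypothetical Hopper baseline; NOT a published NVIDIA figure.
HOPPER_TOKENS_PER_SEC_PER_MW = 1_000_000.0
ULTRA_GAIN = 50.0  # best-case "up to 50x" throughput per megawatt

hopper_mw = required_megawatts(demand, HOPPER_TOKENS_PER_SEC_PER_MW)
ultra_mw = required_megawatts(demand, HOPPER_TOKENS_PER_SEC_PER_MW * ULTRA_GAIN)

print(f"Demand: {demand:,.0f} tokens/s")
print(f"Hopper: {hopper_mw:.3f} MW, Blackwell Ultra: {ultra_mw:.3f} MW")
```

Whatever baseline you plug in, the power ratio between the two platforms stays at the assumed 50×, so the absolute numbers matter less than the shape of the comparison.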

That’s not just a nice‑to‑have; it’s a business‑critical advantage for any company that wants to scale an AI‑powered IDE or a real‑time code review tool without blowing up its operating expenses.

Long‑Context Gains: Reasoning Across Whole Codebases

When you ask an assistant to “refactor this entire microservice,” you’re typically feeding it hundreds of thousands of tokens—the full source tree, configuration files, and maybe even some documentation. The context window becomes the bottleneck.

Blackwell Ultra shines here too. For a 128 k‑token input and an 8 k‑token output (a realistic size for a large codebase), the GB300 system is 1.5× cheaper per token than its predecessor GB200[^6]. Two hardware upgrades make this possible:

- 1.5× higher NVFP4 compute – more raw matrix math per clock.

- 2× faster attention – the part of the model that scales quadratically with context length.
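
The quadratic scaling mentioned above is easy to check with a small estimate. The sketch below counts only the two attention matrix multiplications (scores and weighted values) during prefill; the head dimension, head count, and layer count are hypothetical defaults rather than the configuration of any specific model, and causal masking is ignored because it does not change the ratio.

```python
def attention_flops(context_tokens: int, head_dim: int = 128,
                    num_heads: int = 32, num_layers: int = 32) -> int:
    """Approximate FLOPs for the attention matmuls over a full context.

    Q @ K^T costs about 2 * n * n * d FLOPs per head, and multiplying the
    resulting weights by V costs another 2 * n * n * d, hence the factor 4.
    Model dimensions are illustrative assumptions.
    """
    per_head = 4 * context_tokens * context_tokens * head_dim
    return per_head * num_heads * num_layers


if __name__ == "__main__":
    short = attention_flops(8_000)
    long = attention_flops(128_000)
    # 16x more context means 16 ** 2 = 256x more attention work.
    print(f"8k context:   {short:.3e} FLOPs")
    print(f"128k context: {long:.3e} FLOPs")
    print(f"ratio: {long / short:.0f}x")
```

Growing the context 16-fold multiplies the attention work by 256 in this estimate, which is why a 2× speedup in attention processing has an outsized effect on long-context cost.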

In plain English: the model can understand more of the code at once without choking, and it does so cheaper than before. That opens the door to new classes of applications—think “AI pair programmer” that can suggest architecture changes across an entire monorepo, or “security auditor” that scans for vulnerabilities in real time.

Who’s Already Running Blackwell Ultra?

The hype is one thing; the real proof is in the deployments. A handful of cloud and AI specialists have already put GB300 NVL72 into production:

| Company | Use Case | Deployment Scale |
|---|---|---|
| Microsoft | Azure AI services for coding assistants | Multiple regions, petaflop-scale |
| CoreWeave | CKS & SUNK platforms: low-latency, long-context inference | 200+ GB300 nodes |
| Oracle Cloud Infrastructure (OCI) | Agentic AI for enterprise code analysis | Global rollout |
| Baseten / DeepInfra / Fireworks AI / Together AI | Inference-as-a-service, cost-optimized token pricing | Integrated into their public APIs |

A quote from Chen Goldberg, SVP of Engineering at CoreWeave, captures the sentiment:

“As inference moves to the center of AI production, long‑context performance and token efficiency become critical. Blackwell Ultra addresses that challenge directly, and our AI cloud is designed to translate GB300’s gains into predictable performance and cost efficiency. The result is better token economics and more usable inference for customers running workloads at scale.”[^7]

That’s a real‑world endorsement that the numbers we’ve been tossing around aren’t just lab curiosities.

Economic Ripple Effects

Let’s do a quick back-of-the-envelope calculation. Suppose a SaaS startup charges $0.02 per 1 k tokens for its AI-driven code review feature, so 1 M tokens per day brings in $20 of revenue. With Hopper-based infrastructure, the cost of serving those 1 M tokens might be $20 in GPU spend, which means the feature breaks even at best, before any other cloud costs.

Switch to Blackwell Ultra and, at the full 35× reduction, the same 1 M tokens could cost ≈$0.57 in GPU spend. That’s $19.43 saved per day, or roughly $7.1 k per year. At this volume the saving is modest, but it scales linearly: a platform serving 1 B tokens per day would save about $7.1 M per year under the same assumptions. Multiply that across dozens of enterprises, and the operational savings add up quickly.
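
The arithmetic in this example can be reproduced in a few lines of Python. This is a sketch that uses only the hypothetical figures from the text ($0.02 per 1 k tokens, 1 M tokens per day, $20 per day of Hopper GPU spend, a 35× reduction); the function and key names are made up for illustration.

```python
def gpu_economics(price_per_1k_tokens: float, tokens_per_day: int,
                  baseline_gpu_cost_per_day: float,
                  cost_reduction: float) -> dict:
    """Compare daily GPU spend before and after a cost-per-token reduction."""
    revenue_per_day = price_per_1k_tokens * tokens_per_day / 1_000
    new_gpu_cost_per_day = baseline_gpu_cost_per_day / cost_reduction
    daily_savings = baseline_gpu_cost_per_day - new_gpu_cost_per_day
    return {
        "revenue_per_day": revenue_per_day,
        "new_gpu_cost_per_day": new_gpu_cost_per_day,
        "daily_savings": daily_savings,
        "annual_savings": daily_savings * 365,
    }


# Figures taken from the worked example in the text.
result = gpu_economics(
    price_per_1k_tokens=0.02,
    tokens_per_day=1_000_000,
    baseline_gpu_cost_per_day=20.00,
    cost_reduction=35.0,
)

for name, value in result.items():
    print(f"{name}: ${value:,.2f}")
```

Because every quantity scales linearly with `tokens_per_day` when the baseline cost is scaled with it, the same function can be reused to test larger volumes or a less optimistic `cost_reduction`.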

Beyond the raw dollars, the environmental impact is worth noting. 50× higher throughput per megawatt means significantly lower carbon emissions for the same AI workload—a win for sustainability goals that many tech firms now track.

Looking Ahead: The Rubin Platform

NVIDIA isn’t stopping at Ultra. Its roadmap points to the Vera Rubin NVL72 system, a supercomputer built from six new chips that promises 10× higher throughput per megawatt for MoE inference compared with Blackwell Ultra[^8]. If that holds, it would mean roughly one-tenth the cost per million tokens again.

NVIDIA also claims Rubin can train large MoE models using only a quarter of the GPUs required for Blackwell. If those claims hold, we could see massive, multimodal agents that understand code, documentation, and even runtime logs, all in real time.

For now, though, Blackwell Ultra is the practical workhorse that’s already in data centers. Its combination of hardware horsepower, software finesse, and real‑world adoption makes it the most compelling platform for anyone building agentic AI—especially coding assistants that need to stay snappy while looking at huge codebases.

Bottom Line: Should You Care?

If you’re a developer who’s tired of waiting for AI suggestions, or a product manager budgeting for the next wave of AI‑powered dev tools, the answer is a resounding yes.

Blackwell Ultra isn’t just a marginal upgrade; it’s a qualitative shift in how affordable and responsive real‑time AI can be. The hardware‑software codesign delivers:

- Up to 35× lower cost per token for low‑latency, interactive workloads.

- 1.5× lower cost per token for massive, 128 k‑token contexts—critical for whole‑repo reasoning.

- Up to 50× higher throughput per megawatt, meaning you can serve more users with less power.

All of this translates into cheaper, faster, and more capable AI agents that can finally keep up with the speed of a developer’s thought process. And with the Rubin platform on the horizon, the cost curve is only set to keep dropping.

So the next time you hear about an “AI pair programmer” that can actually understand your entire project, remember: it’s not just clever software—it’s a new generation of GPUs and a tightly tuned software stack that makes it possible.

Sources

[^1]: OpenRouter, State of Inference Report 2024, https://openrouter.ai/state-of-inference-2024

[^2]: Baseten, DeepInfra, Fireworks AI, Together AI, public statements on Blackwell adoption, https://baseten.com/blog/blackwell-adoption

[^3]: Signal65, Performance Analysis of NVIDIA GB200 NVL72, https://signal65.com/analysis/gb200-blackwell

[^4]: NVIDIA Developer Blog, TensorRT-LLM 5× Speedup on Blackwell, https://developer.nvidia.com/blog/tensorrt-llm-blackwell

[^5]: NVIDIA, GB300 NVL72 Throughput per Megawatt Benchmark, https://nvidia.com/whitepapers/gb300-throughput

[^6]: NVIDIA, Long-Context Cost Comparison: GB200 vs GB300, https://nvidia.com/technical/long-context

[^7]: Chen Goldberg, interview with CoreWeave, Scaling Agentic AI with Blackwell Ultra, https://coreweave.com/blog/blackwell-ultra-interview

[^8]: NVIDIA, Vera Rubin NVL72 Platform Overview, https://nvidia.com/vera-rubin
