Unlocking AI Potential: Fine-Tuning for Specialized Tasks

- Turker Senturk

- AI

- 15 Dec, 2025

- 4 min read

Key Highlights

- Enhanced Accuracy: Fine-tuning allows AI models to achieve higher accuracy in specialized tasks.

- Unsloth Framework: An open-source framework optimized for efficient, low-memory training on NVIDIA GPUs.

- NVIDIA Nemotron 3: A new family of open models that NVIDIA describes as having its most efficient architecture yet for agentic AI applications.

Imagine having an AI assistant that can handle complex tasks with precision, from managing your schedule to providing expert-level support. Getting general-purpose models to perform such tasks with consistently high accuracy, however, has been a challenge. That's where fine-tuning comes in: models are customized to excel in specific areas, and with the right tools, the process is becoming more accessible than ever.

The Power of Fine-Tuning

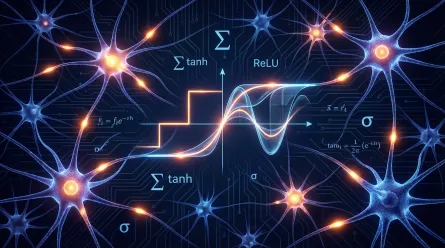

Fine-tuning is essentially giving an AI model a focused training session, using examples tied to a specific topic or workflow. The model improves its accuracy by learning new patterns and adapting to the task at hand. Choosing the right fine-tuning method depends on how much of the original model the developer wants to adjust. There are three main methods: parameter-efficient fine-tuning, full fine-tuning, and reinforcement learning. Each suits different use cases and has different requirements, ranging from small to large datasets, and the method chosen determines how much VRAM (GPU memory) the training run needs.
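To make the VRAM point concrete, here is a rough back-of-the-envelope estimator. It is a sketch under loudly stated assumptions, not a measurement: it uses common rules of thumb for bytes per parameter (16 bytes for full fine-tuning with mixed precision and the Adam optimizer, 2 bytes for a frozen 16-bit base model, 0.5 bytes for a frozen 4-bit base), ignores activations and framework overhead, and the function name `estimate_vram_gb` and the 8-billion-parameter model are hypothetical.

```python
# Rough, weights-and-optimizer-only VRAM estimates for fine-tuning.
# ASSUMED bytes per parameter (rules of thumb, not measurements):
#   full fine-tuning, mixed precision + Adam: 2 (bf16 weights) + 2 (grads)
#     + 4 (fp32 master weights) + 8 (two fp32 Adam moments) = 16 bytes
#   adapter training on a frozen bf16 base: 2 bytes per frozen weight
#   adapter training on a frozen 4-bit base: 0.5 bytes per frozen weight
# Activations, KV caches, and framework overhead are ignored.

BYTES_PER_PARAM = {
    "full_bf16_adam": 16.0,
    "lora_bf16_base": 2.0,
    "lora_4bit_base": 0.5,
}

# ASSUMED cost per trainable adapter parameter: weight + grad + optimizer state.
ADAPTER_BYTES_PER_PARAM = 16.0


def estimate_vram_gb(num_params: float, method: str, trainable_fraction: float = 0.0) -> float:
    """Return an approximate memory footprint in GB (1 GB = 1e9 bytes)."""
    base = num_params * BYTES_PER_PARAM[method]
    if method == "full_bf16_adam":
        # Full fine-tuning already counts gradients and optimizer state for every weight.
        adapter = 0.0
    else:
        # Only the small adapter is trained, so only it carries gradients and optimizer state.
        adapter = num_params * trainable_fraction * ADAPTER_BYTES_PER_PARAM
    return (base + adapter) / 1e9


if __name__ == "__main__":
    n = 8e9  # a hypothetical 8-billion-parameter model
    for method in BYTES_PER_PARAM:
        gb = estimate_vram_gb(n, method, trainable_fraction=0.01)
        print(f"{method:>16}: ~{gb:.0f} GB")
```

Under these assumptions, full fine-tuning of the hypothetical 8B model needs roughly 128 GB just for weights, gradients, and optimizer state, while adapter-based training on a 4-bit base fits in a few gigabytes. Real numbers depend on sequence length, batch size, and the framework used.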

Fine-Tuning Methods and Their Applications

- Parameter-Efficient Fine-Tuning: Updates only a small portion of the model, ideal for adding domain knowledge or improving coding accuracy.

- Full Fine-Tuning: Updates all model parameters, useful for advanced tasks like building AI agents or chatbots that need to follow specific formats or styles.

- Reinforcement Learning: Adjusts model behavior using feedback or preference signals, suitable for improving model accuracy in specific domains or building autonomous agents.
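Parameter-efficient fine-tuning is commonly done with LoRA (low-rank adaptation), one popular PEFT technique; the article itself does not name a specific method. The pure-Python sketch below, with hypothetical names such as `LoRALinear` and `matvec`, illustrates the core idea: the pretrained weight matrix stays frozen, and only two small matrices `A` and `B` are trained, with their scaled product added to the layer's output.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class LoRALinear:
    """Hypothetical illustration: frozen weight W plus a trainable update (alpha / r) * B @ A."""

    def __init__(self, weight, rank, alpha, seed=0):
        d_out, d_in = len(weight), len(weight[0])
        rng = random.Random(seed)
        self.weight = weight  # frozen pretrained weights, shape (d_out, d_in)
        # A (rank x d_in) starts small and random; B (d_out x rank) starts at zero,
        # so the low-rank update contributes nothing until training changes B.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.weight, x)               # W @ x, from the frozen weights
        update = matvec(self.B, matvec(self.A, x))  # B @ (A @ x), the low-rank path
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])

    def frozen_params(self):
        return len(self.weight) * len(self.weight[0])


if __name__ == "__main__":
    d = 64
    # Identity matrix as a stand-in for pretrained weights.
    W = [[1.0 if i == j else 0.0 for j in range(d)] for i in range(d)]
    layer = LoRALinear(W, rank=4, alpha=8)
    x = [float(i) for i in range(d)]
    print("output unchanged at init:", layer.forward(x) == x)
    print(layer.trainable_params(), "trainable vs", layer.frozen_params(), "frozen")
```

Because `B` starts at zero, the adapted layer initially behaves exactly like the original one, and with rank 4 on a 64×64 layer only 512 parameters are trainable versus 4,096 frozen ones. Production libraries do the same arithmetic on GPU tensors across many layers.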

Unsloth: A Fast Path to Fine-Tuning

Unsloth, one of the world's most widely used open-source frameworks for fine-tuning large language models (LLMs), provides an approachable way to customize models. It is optimized for NVIDIA GPUs, from GeForce RTX desktops and laptops to RTX PRO workstations and DGX Spark, which NVIDIA bills as the world's smallest AI supercomputer. Unsloth translates complex mathematical operations into efficient, custom GPU kernels, which speeds up training and makes fine-tuning accessible to a broader community of AI enthusiasts and developers.

NVIDIA Nemotron 3 Family of Open Models

The newly announced NVIDIA Nemotron 3 family is, according to NVIDIA, its most efficient family of open models, making it well suited to fine-tuning for agentic AI. Available in Nano, Super, and Ultra sizes, Nemotron 3 offers scalable reasoning and long-context performance optimized for RTX systems and DGX Spark. Nemotron 3 Nano, in particular, is optimized for tasks such as software debugging, content summarization, and information retrieval at low inference cost.

DGX Spark: Compact AI Powerhouse

DGX Spark enables local fine-tuning and packs substantial AI performance into a compact desktop supercomputer. Built on the NVIDIA Grace Blackwell architecture, DGX Spark delivers up to a petaflop of FP4 AI performance and includes 128GB of unified CPU-GPU memory. This lets developers run larger models, longer context windows, and more demanding training workloads locally, without waiting in cloud queues.
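The 128GB figure can be turned into a quick feasibility check. The sketch below counts only the memory needed to hold model weights at a given precision (2 bytes per parameter for FP16, 1 for FP8, 0.5 for FP4), treats 1 GB as 10^9 bytes, and lets you reserve optional headroom for activations and the KV cache. The model sizes and the `fits` and `weight_footprint_gb` helpers are illustrative assumptions, not published Nemotron 3 figures or an NVIDIA tool.

```python
# Hypothetical weights-only memory check against DGX Spark's unified memory.
UNIFIED_MEMORY_GB = 128  # unified CPU-GPU memory, as stated in the article

# ASSUMED storage cost per parameter at each precision (weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Memory needed just to hold the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits(num_params: float, dtype: str, headroom_gb: float = 0.0,
         budget_gb: float = UNIFIED_MEMORY_GB) -> bool:
    """True if weights plus reserved headroom fit within the memory budget."""
    return weight_footprint_gb(num_params, dtype) + headroom_gb <= budget_gb


if __name__ == "__main__":
    for params in (8e9, 70e9, 200e9):  # hypothetical model sizes
        for dtype in BYTES_PER_PARAM:
            gb = weight_footprint_gb(params, dtype)
            verdict = "fits" if fits(params, dtype) else "does not fit"
            print(f"{params / 1e9:.0f}B @ {dtype}: ~{gb:.0f} GB of weights, "
                  f"{verdict} in {UNIFIED_MEMORY_GB} GB")
```

For example, a hypothetical 70-billion-parameter model needs about 140 GB for its weights at FP16, more than the 128GB available, but about 35 GB at FP4. Fine-tuning adds gradients and optimizer state on top of this, so treat the result as a lower bound.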

Why This Matters

The ability to fine-tune AI models for specialized tasks opens the door to a wide range of new applications. With tools like Unsloth and the NVIDIA Nemotron 3 family of open models, developers can build more accurate and efficient AI systems. As these technologies evolve, AI is likely to become even more integrated into daily life, from personal assistants to professional tools. The future of AI development is not just about creating intelligent machines but about making them work better for us, and fine-tuning is a crucial step in that journey.
